Introducing Sim2h

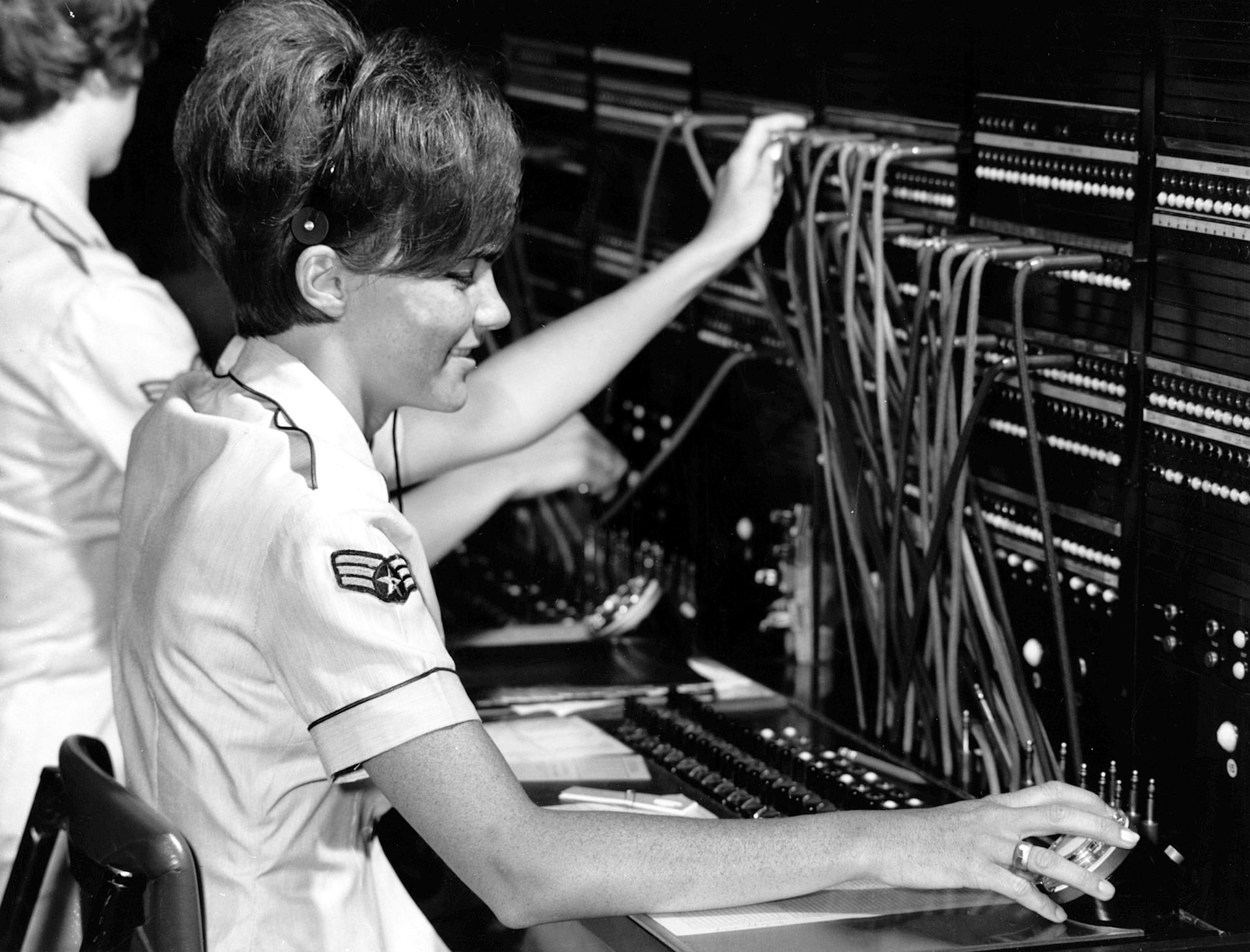

Holochain’s simple “switch-board” networking implementation

Three weeks ago, we introduced sim1h as a centralized test networking alternative and described how this helps debugging efforts both for the integration of the Holochain technology stack (hApps on top of Holochain core on top of our networking implementation) and for hApp developers debugging their hApps. In that post we also described how this approach of mocking the network with a centralized component provides us with an evolution path of iteratively pulling in aspects of lib3h as they’re ready -- instead of trying to conquer all of the inherent complexity in a single iteration.

Sim1h was our first stab at this, and over the past three weeks we have come far enough down that proposed evolution path to present the next stage: sim2h.

Why is this important?

Before we dive into the differences between sim1h and sim2h and a discussion of how you can make use of sim2h, I want to highlight why we are excited about this milestone and why you might be as well:

Sim2h allows us to both run early public test instances of the full Holochain stack and also invite hApp developers to try their hApps over the Internet and help us collect performance data.

In other words, sim2h marks the first point in time, since we abandoned the Go prototype, at which anyone (developers and non-developers) can run alpha test releases of hApps over the public internet!

So what is sim2h?

There is one thing that sim1h and sim2h have in common: both rely on a central point connecting the agents -- but that is about it.

While sim1h closely resembles a contemporary centralized front-end / back-end architecture in that it uses a database as the central component, sim2h does not rely on any centralized data persistence! Instead, it plays a role similar to the old telephone switch-boards, where an operator takes a request from one network participant and sets up the appropriate connection to another participant (without storing or controlling what those participants say on that line).

Interestingly, this actually reduces complexity compared to sim1h, where the network had to be simulated inside a database, which meant storing messages and then polling for them on the other end. The concept of a switch-board, which maintains an active websocket connection to each agent that is online, fits much better with the assumptions made in other parts of the Holochain stack, mainly Holochain core as well as lib3h. In fact, the central component of sim2h borrows a lot from existing pieces and is essentially like the in-memory network we’ve used in core for local testing of multiple instances (i.e. mocking the network in memory). In other words, sim2h uses the websocket implementation from lib3h to create encrypted “wormholes” between each Holochain conductor instance and this central “in-memory” switch-board.

The result is that we have a single point that routes all Holochain network messages to their intended recipients.
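The switch-board idea can be illustrated with a minimal Python sketch. Note that sim2h itself is written in Rust, and this is not its code. The sketch makes two assumptions:

- Connections are keyed by a DNA address plus an agent ID.
- An in-memory queue stands in for each websocket.

Switchboard and its methods are hypothetical names chosen for illustration. What matters is what the sketch leaves out: route() hands a message to the recipient’s open connection and keeps nothing itself.

```python
from collections import deque


class Switchboard:
    """Toy in-memory switch-board that only forwards messages.

    Connections are keyed by (dna_address, agent_id), so agents can only reach
    peers running the same DNA. Each deque stands in for an open websocket.
    """

    def __init__(self):
        self.connections = {}  # (dna_address, agent_id) -> inbound queue

    def connect(self, dna_address, agent_id):
        # An agent coming online registers its connection for one DNA.
        inbox = deque()
        self.connections[(dna_address, agent_id)] = inbox
        return inbox

    def disconnect(self, dna_address, agent_id):
        # Going offline removes only the channel; no entry data lives here.
        self.connections.pop((dna_address, agent_id), None)

    def route(self, dna_address, sender, recipient, payload):
        """Hand payload to the recipient's connection; True if delivered."""
        if (dna_address, sender) not in self.connections:
            return False  # unknown sender for this DNA
        inbox = self.connections.get((dna_address, recipient))
        if inbox is None:
            return False  # recipient offline: this sketch drops, never stores
        inbox.append((sender, payload))
        return True
```

Dropping messages for offline agents is a simplification made by this sketch. Later in this post, we describe how sim2h helps nodes that come back online catch up.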

Even so, all of the other specific and important aspects of the Holochain network are still present, including:

- Entries/data are only stored at Holochain nodes/instances (not in the central component)

- Every entry is validated by a Holochain node/instance (not the switch-board)

- Holochain’s overlay-network (where each agent is addressed with its agent_id, i.e. its public key) is the topology that core instances see and use to talk to each other

- It is not possible to impersonate an agent/user without access to their keys and source chain and neither are stored at the central component. (That means the principles of our Cryptographic Autonomy License still apply despite the centralized nature of sim2h.)

Note that the above bullet points are not true for sim1h, as messages (as well as entry data) are stored in the centralized database, and that’s what nodes rely on. Getting rid of that database both brings us much closer to the network structure that Holochain is built for and has a very important pragmatic benefit: the central component doesn’t need to be protected the way a database would, as it doesn’t manage any persistent state.

The database sim1h uses can’t just be made publicly available on the internet, even for testing purposes, without asking for trouble. Sim2h, on the other hand, just facilitates connections between nodes of the same DNA. The state of the DHT is stored exclusively in the nodes at the edges. Any change has to pass through the DNA’s validation rules, the same way it would in a fully peer-to-peer setting.
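The same division of labour can be sketched from the node’s side. In this hypothetical Python example, a Node applies its DNA’s validation rule before holding an entry. Two parts are assumptions for illustration, not Holochain’s actual address format or validation API:

- Entries are content-addressed with SHA-256 over their JSON form.
- The non_empty_post rule stands in for a DNA’s validation logic.

```python
import hashlib
import json


def entry_address(entry):
    # Content-address an entry by hashing its canonical JSON form
    # (illustrative only; not Holochain's real address format).
    return hashlib.sha256(json.dumps(entry, sort_keys=True).encode()).hexdigest()


class Node:
    """Hypothetical edge node: validates with its DNA's rule, then holds."""

    def __init__(self, validate):
        self.validate = validate  # DNA validation rule: entry -> bool
        self.held = {}            # address -> entry; this node's share of the DHT

    def receive(self, entry):
        # The switch-board only forwarded the entry; validity is decided here.
        if not self.validate(entry):
            return None
        address = entry_address(entry)
        self.held[address] = entry
        return address


def non_empty_post(entry):
    # Example rule for a hypothetical "post" entry type.
    return entry.get("type") == "post" and bool(entry.get("content", "").strip())
```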

This difference is precisely what enables us to use sim2h right now for our first public Holochain test network. This is also an important prerequisite for Holo’s first test network.

That said, the fact that all messages are routed through a single point creates a single point of failure, which surely is not what you would want for a production ready p2p framework. But it actually is very beneficial for test networks, as already described in the post about sim1h. That’s because, in all alpha test networks, and potentially in early betas, we definitely want to be able to log network messages as needed in order to gain a global view for debugging. So we believe the single point of failure is a reasonable trade-off (during alpha and early beta phases) for the ability to analyse the network. And should that central point actually experience issues, we can spawn new machines without even managing any application data, since that is stored at the node level.

How can you use sim2h?

We currently have a public sim2h switch-board running at wss://sim2h.holochain.org:9000. Since v0.0.2 (the latest release is currently v0.0.3), Holoscape offers this sim2h server URL as its default network configuration. This makes sim2h easily available to any hApp and to anyone who can install Holoscape (currently macOS and Linux only).

Using the public sim2h switch-board

Please note: Only use the public server instance at sim2h.holochain.org if you are 1) willing to participate in early tests of the whole integration and 2) willing to have all network messages (including the data of any committed and published entries) logged for the purpose of debugging!

Our main intention behind offering this server is to invite hApp developers into early testing phases and to see how all parts of the system perform under real-world conditions. I’m sure we will discover bugs and edge cases, and we don’t guarantee that this server will be up and running 100% of the time. But any hApp community that is willing to try this early alpha network and provide us with feedback will help us speed up this process a lot!

Just configure your conductor to use this network configuration:

```toml
[network]
type = "sim2h"
sim2h_url = "wss://sim2h.holochain.org:9000"
```

Or stick to Holoscape’s default, which passes exactly the same configuration to the conductor process.

Running your own switch-board server

If you don’t want to use the public server, you can run a sim2h server instance yourself. There are several reasons you might want to. Maybe you don’t want your hApp’s data to show up in our logs. Or you want to capture your own logs of what is happening in the network while running your hApp in order to debug your DNA.

Or maybe you just want to run your own “in-house” test networks for controlled test settings and with real networking.

To do so, you can use the Nix shell configuration from the holochain-rust repository and run the corresponding command:

```shell
cd holochain-rust
nix-shell --run hc-sim2h-server
```

This will run a switch-board listening for incoming connections on the default port 9000.

Alternatively, you can also manually compile and install the executable (sim2h_server will be included in future releases of Holonix as well as published to crates.io to enable quick and easy installation) -- and also pass more command line options:

- To see meta-data of exchanged messages, you can run it with RUST_LOG=debug sim2h_server.

- To change the port use the argument -p <port number>.

- In order to have sim2h save all incoming and outgoing network messages, provide the argument -m <path to log file>. This will create a CSV file with one message per line, containing the time, direction (in/out), agent ID (public key), URL/address of the node communicated with and the message serialized into a string.
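Because the -m log is plain CSV, it is easy to post-process while debugging a DNA. Below is a minimal Python sketch (the function name summarize_message_log is hypothetical). It assumes:

- the five columns appear in the order listed above, with no header row;
- the direction column literally reads "in" or "out";
- serialized messages that contain commas are CSV-quoted.

```python
import csv
from collections import Counter


def summarize_message_log(lines):
    """Count logged messages per (agent_id, direction).

    Assumes each CSV row holds, in order: time, direction, agent ID,
    remote URL and the serialized message, with no header row.
    """
    counts = Counter()
    for row in csv.reader(lines):
        if len(row) < 5:
            continue  # skip blank or truncated lines
        _time, direction, agent_id, _url, _message = row[:5]
        counts[(agent_id, direction.strip())] += 1
    return counts
```

For a real log, pass an open file, e.g. summarize_message_log(open("messages.csv", newline="")), where the file name is whatever path you gave to -m.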

To use your server, you need to configure every client’s conductor so that the sim2h_url parameter in its network configuration points to your server and its sim2h port.

But this would be a conductor-wide (i.e. installation-wide) change that affects every running DNA instance on that node. There is another way, which lets you deploy your DNA and have it run alongside other DNAs inside the user’s conductor, each pointing to a different switch-board server. This DNA-specific setting uses DNA properties to include the sim2h server URL within the DNA. Since Holochain release v0.0.36-alpha1 (the latest, included in Holoscape v0.0.3), there is a special DNA property named “sim2h_url”. If set, it overrides the conductor-wide sim2h_url configuration variable with the value of this property.
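The override rule itself is a simple precedence check. Below is a minimal Python sketch (effective_sim2h_url is a hypothetical name, not a Holochain API). It assumes:

- the DNA manifest is available as a JSON string;
- the conductor’s [network] section is available as a dict;
- an empty string counts as “not set”.

```python
import json


def effective_sim2h_url(dna_manifest_json, conductor_network):
    """Return the sim2h URL one DNA instance would use.

    A "sim2h_url" in the DNA's "properties" wins; otherwise the
    conductor-wide [network] setting applies. Treating an empty string
    as unset is an assumption of this sketch.
    """
    manifest = json.loads(dna_manifest_json)
    properties = manifest.get("properties") or {}
    override = properties.get("sim2h_url")
    return override or conductor_network.get("sim2h_url")
```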

You set properties in the root JSON manifest file of your DNA. See this example:

```json
{
  "name": "My Example DNA",
  "description": "This DNA shows how to override the conductor wide sim2h URL by DNA",
  "version": "0.1",
  "uuid": "00000000-0000-0000-0000-000000000000",
  "dna_spec_version": "2.0",
  "properties": {
    "sim2h_url": "wss://sim2h.my-example-server.invalid:9000"
  }
}
```

What’s next?

The current implementation of the sim2h switch-board broadcasts new entries to all nodes and makes sure that a node coming online receives all entries it missed while offline. Sim2h does this in three steps:

- It asks other nodes what they hold.
- It keeps an in-memory cache of the addresses of all entries in the network that it has seen.
- It compares the holding and authoring lists of nodes.

In other words, sim2h currently orchestrates the network so that each node holds a full copy of all the entry data, i.e. a full-sync or mirror DHT.
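The catch-up step can be pictured as a set difference. The Python sketch below is hypothetical. It folds each node’s authored entries into its holding list. For every missing address, it picks the first node (by ID) that holds it as the source. How sim2h actually chooses where to fetch missing data from is not described here.

```python
def full_sync_plan(holding):
    """Plan a full-sync (mirror) DHT: list, per node, the addresses it lacks.

    holding maps node id -> set of entry addresses the node reports holding
    (authored entries included). Only addresses are cached, never contents.
    """
    seen = set().union(*holding.values())  # in-memory cache of all addresses
    plan = {}
    for node, held in sorted(holding.items()):
        missing = seen - held
        if missing:
            # Pick the first node (by id) that holds each missing address.
            plan[node] = {
                addr: next(src for src, h in sorted(holding.items()) if addr in h)
                for addr in sorted(missing)
            }
    return plan
```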

Unfortunately, since each DNA agent requires a separate sim2h websocket connection, each server is theoretically limited (by centralized server port constraints) to communicating with about 65,000 agents. Our next steps are related to overcoming this limitation.

The next step, which is already being worked on, is to pull in more lib3h code and implement rrDHT-style sharding with sim2h. This is a good example of the benefit this approach gives us. We can test high-level assumptions and algorithms just by changing the gossip logic within the sim2h server, which has all the needed information in its local transient memory. It is much easier to iterate on different aspects of the networking implementation and get each one (like the sharding algorithms) right before jumping to a fully decentralized network, which is much harder to analyse.
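This hypothetical Python sketch shows why swapping out the gossip logic is cheap. It defines two interchangeable target-selection functions:

- full_sync_targets matches the full-sync behaviour described above.
- sharded_targets picks the agents closest to an entry’s address on a hash ring.

The ring scheme, the SHA-256 hash and the redundancy parameter are illustrative assumptions. This is a generic nearest-neighbour sharding scheme, not rrDHT.

```python
import hashlib

RING_SIZE = 2 ** 32


def ring_position(key):
    # Map an address or agent id onto a 32-bit ring (illustrative hash choice).
    return int.from_bytes(hashlib.sha256(key.encode()).digest()[:4], "big")


def full_sync_targets(address, agents):
    # Full-sync behaviour: every agent receives every entry.
    return sorted(agents)


def sharded_targets(address, agents, redundancy=2):
    """Generic sharding: the `redundancy` agents nearest the entry on the ring.

    Not rrDHT; only shows that target selection is one swappable function.
    """
    pos = ring_position(address)

    def distance(agent):
        d = abs(ring_position(agent) - pos)
        return min(d, RING_SIZE - d)  # wrap-around distance on the ring

    return sorted(agents, key=lambda a: (distance(a), a))[:redundancy]
```

A switch-board holding all connections in memory could call either function with the same arguments. That is the kind of quick iteration on gossip logic described above.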

I want to close by expressing my personal excitement about the fact that with sim2h we don’t have to wait any longer until all aspects of lib3h are proven out and ready to be used as a whole. Instead, we can shift into a much more agile pattern and work more closely together with the community of hApp developers, since now (after more than a year of waiting for the “Rust refactor”) we have a fully working Holochain stack again.

Going forward from here, we can drive the build-out of the last missing pieces for the concrete and specific needs of all you hApp developers out there, and stay in close feedback-loops with you to ensure we’re meeting all of your needs. In other words, we want to hear from you!